The Scoop

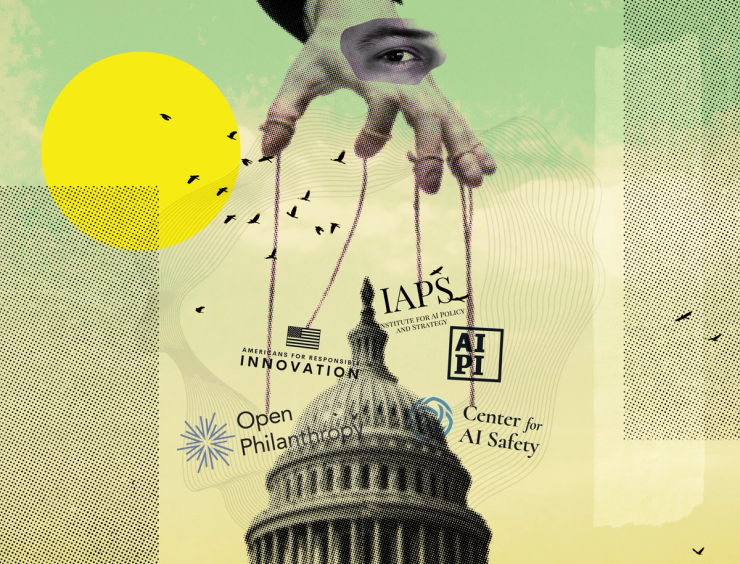

Several well-moneyed think tanks focusing on artificial intelligence policy have sprung up in Washington, D.C., in recent months, with most linked to the billionaire-backed effective altruism (EA) movement that has made preventing an AI apocalypse one of its top priorities.

Funded by people like Facebook co-founder Dustin Moskovitz, their goal is to influence how U.S. lawmakers regulate AI, which has become one of the hottest topics on Capitol Hill since the release of ChatGPT last year. Some of the groups are pushing for limits on the development of advanced AI models or increased restrictions on semiconductor exports to China.

One previously unreported group was co-founded by Eric Gastfriend, an entrepreneur who runs a telehealth startup for addiction treatment with his father. Americans for Responsible Innovation (ARI) plans to “become one of the major players influencing AI policy,” according to a job listing. Gastfriend told Semafor he is entirely self-funding the project.

Another organization, the Institute for AI Policy and Strategy (IAPS), began publishing research late last month and aims to reduce risks “related to the development & deployment of frontier AI systems.” It’s being funded by Rethink Priorities, an effective altruism-linked think tank that received $2.7 million last year to study AI governance.

That money came from Open Philanthropy, a prolific grant-making organization primarily funded by Moskovitz and his wife Cari Tuna. Open Philanthropy has spent more than $330 million to prevent harms from future AI models, making it one of the most prominent financial backers of technology policy work in Washington and elsewhere. The Center for AI Safety (CAIS), another group funded by Open Philanthropy, recently registered its first federal lobbyist, according to a public filing.

IAPS has been coordinating with at least one organization that has also received funding from Open Philanthropy, the prominent think tank Center for a New American Security (CNAS), according to a person familiar with the matter. IAPS and CNAS did not return requests for comment.

IAPS is not to be confused with the similarly named Artificial Intelligence Policy Institute (AIPI), an organization launched in August by 30-year-old serial entrepreneur Daniel Colson. The group is also aiming to find “political solutions” to avoid potential catastrophic risks from AI, according to its website.

AIPI said it’s already met with two dozen lawmakers and is planning to expand into formal lobbying soon. Over the last two months, research and polling published by the group have been picked up by a plethora of news outlets, including Axios and Vox.

Colson, who previously founded a cryptocurrency startup as well as a company for finding personal assistants, said that AIPI was initially funded by anonymous donors from the tech and finance industries and is continuing to raise money.

“The center of our focus is on what AI lab leaders call the development of superintelligence,” Colson told Semafor in August. “What happens when you take GPT-4 and scale it up by a billion?” That kind of powerful AI model, he argued, could destabilize the world if not managed carefully.

Know More

Thought experiments about a future AI-related catastrophe have long animated followers of effective altruism, a charity initiative that was incubated in the elite corridors of Silicon Valley and Oxford University in the 2010s.

EA initially focused on issues like animal welfare, global poverty, and infectious diseases, but in the last few years, its adherents have begun emphasizing the importance of humanity’s long-term future, especially how it may be disrupted by the dawn of “superintelligence.”

Colson, the leader of AIPI, has recently tried to distance himself from EA, which faced a storm of controversy last year after one of its biggest backers, the cryptocurrency magnate Sam Bankman-Fried, was arrested on fraud charges.

But he was once an active leader in the movement and co-directed a project that aimed to create student groups promoting EA at every college, according to a speaker biography from 2016. At Harvard, one of those student groups was co-founded by Gastfriend, now the leader of ARI.

Louise’s view

Groups like IAPS and AIPI might disagree about some of the specifics, but they are part of the same broad ideological tradition, one that’s primarily concerned about what could happen if AI displaces humans as the most powerful entities on the planet. There are plenty of good arguments for why that shouldn’t be the primary focus of AI policy, and lawmakers will need to also consider viewpoints from outside this bubble if they want to be effective.

The View From China

Chinese authorities released a strikingly detailed draft document earlier this month outlining what the government considers a safety risk when it comes to AI models. It suggests that companies randomly sample 4,000 pieces of content from every database they use to train their systems, and if over 5% contains “illegal and negative information,” the corpus should be blacklisted.

The document also directs companies to make sure their censorship is not too obvious, according to MIT Technology Review writer Zeyi Yang. Some Chinese generative AI programs, such as Baidu’s Ernie chatbot, currently refuse to answer questions related to sensitive topics such as criticism of China’s political system or leadership. The document, which is not law but offers an outline of what future policies could look like, says companies should find appropriate answers for most of these questions instead of just rebuffing them.

Notable

- Politico recently tracked the growing influence that organizations linked to effective altruism are having on AI policy in both the U.S. and U.K.

- Yann LeCun, Meta’s chief AI scientist, dismissed the idea that AI might kill humanity in an interview with the Financial Times this week, calling it “preposterous.”

- Semafor previously interviewed the president of the Future of Life Institute, another organization worried about a potential AI apocalypse and funded in part by Open Philanthropy.