The News

After releasing the newest version of its artificial intelligence software, called GPT-4, OpenAI demonstrated what it can do, and how it compares to the current version of ChatGPT.

While the demo, led by OpenAI president and cofounder Greg Brockman, was primarily aimed at developers, it also showed capabilities that everyday users could take advantage of.

Know More

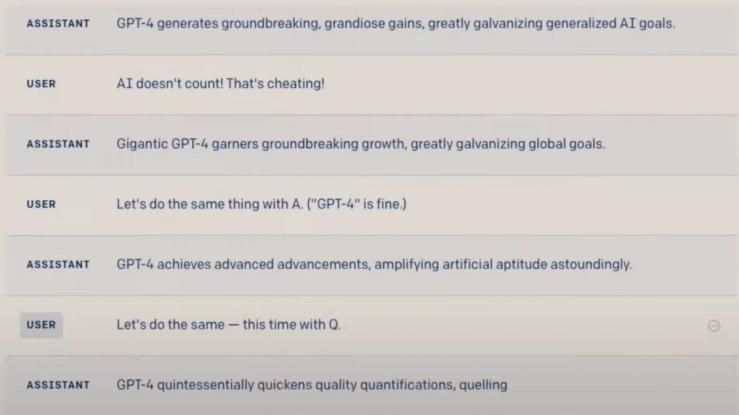

- The new model is more sophisticated at understanding instructions and directions from users. Brockman had GPT-4 summarize a long OpenAI blog post into one sentence, and then had it rewrite the sentence so each word started with the same letter. He tested it with the letters A, G, and Q, and it returned a grammatically correct sentence each time.

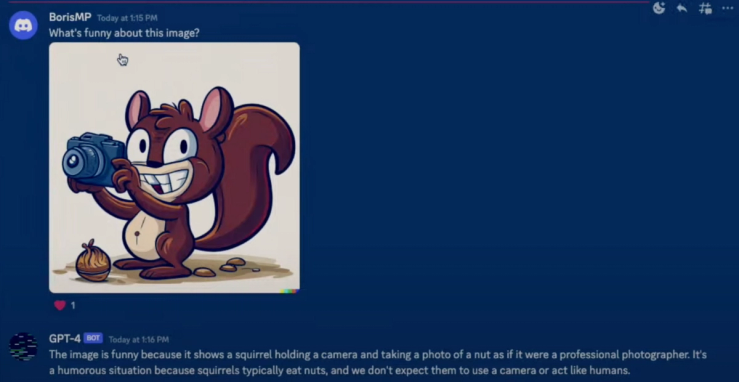

- The new model can analyze and describe photos. GPT-4 perfectly described a screenshot of a Discord channel. It was also able to explain why an illustration of a squirrel taking a photo of a nut was funny. (OpenAI hasn’t yet made the image description feature available to the public.)

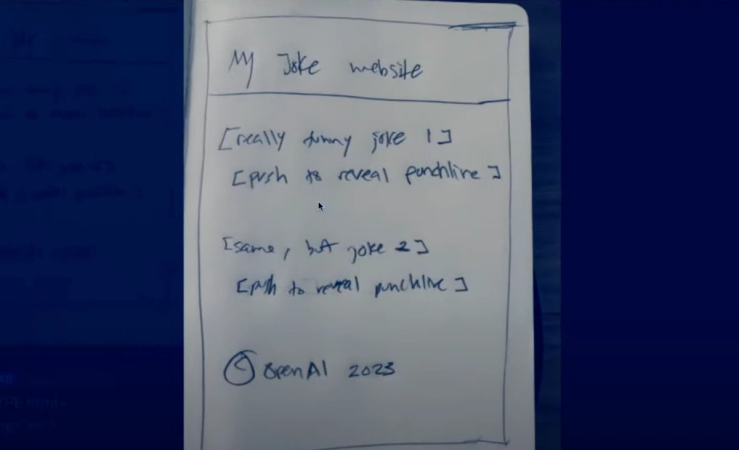

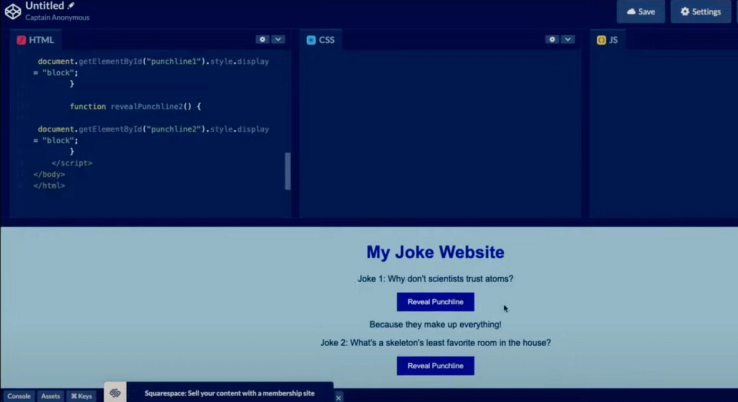

- Brockman used his phone to photograph a hand-drawn mockup of a simple website he had scribbled in a notebook. GPT-4 was able to convert that drawing into a website using HTML and JavaScript code.

- GPT-4 is able to analyze dense passages of text: It explained the answer to a complex question about taxes after Brockman pasted in 16 pages' worth of the U.S. tax code. To top it off, GPT-4 also recreated the explanation in the form of a rhyming poem.

GPT-4 is currently available to paying subscribers of ChatGPT Plus.

OpenAI said the model is safer and more factual than previous GPT iterations. Still, the company said the model has “many known limitations that we are working to address,” including social biases, adversarial prompts, and hallucinations, in which a chatbot responds confidently with an incorrect answer that’s not based on its training data.