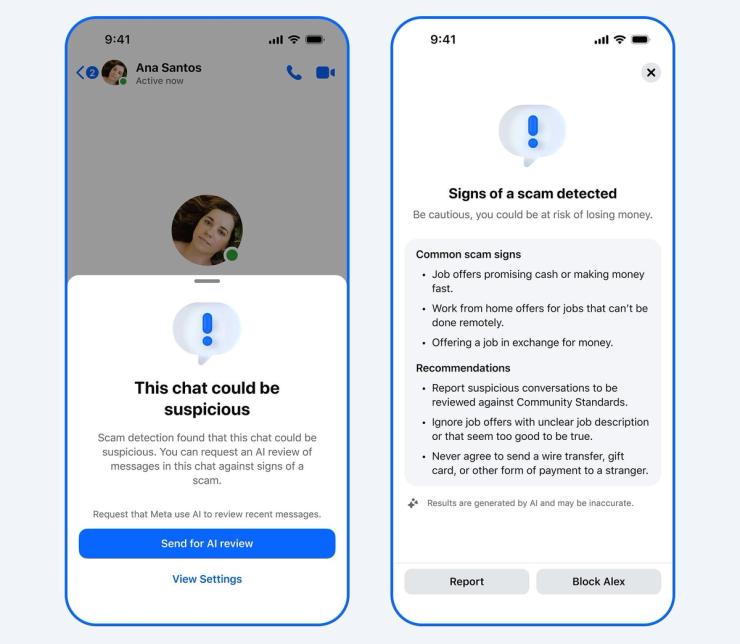

Tech companies announced a slew of new security and scam-prevention tools this week, part of their ongoing efforts to clean up the AI slop, and the security risks, that their technology helped create. YouTube expanded its deepfake detection technology to politicians and journalists, while Meta is now warning users about suspicious Facebook friend requests and about apparent attempts by scammers to link a user’s WhatsApp account to the scammer’s device.

Meta’s tools are “part of a multilayer defense” and are not sufficient on their own, David Agranovich, Meta’s director of global threat disruption, told reporters. That’s because scammers are increasingly operating across multiple platforms and using AI to make their accounts more realistic. The nature of cybersecurity means tech companies are largely responding to scams, rather than getting ahead of them. And as AI helps bad actors develop ever more sophisticated tactics, tech companies will continue to struggle to put the genie back in the bottle.