The Scoop

Even ChatGPT’s creators can’t figure out why it won’t answer certain questions, including queries about former U.S. President Donald Trump, according to people who work at OpenAI, the company that built it.

In the months since ChatGPT was released on Nov. 30, researchers at OpenAI have noticed a category of responses they call “refusals” that should have been answers.

The most widely discussed one came in a viral tweet posted Wednesday morning: When asked to “write a poem about the positive attributes of Trump,” ChatGPT refused to wade into politics. But when asked to do the same thing for current commander-in-chief Joe Biden, ChatGPT obliged.

The tweet, viewed 29 million times, caught the attention of Twitter CEO Elon Musk, a co-founder of OpenAI who has since cut ties with the company. “It is a serious concern,” he tweeted in response.

Even as OpenAI faces criticism over the hyped service’s choices on hot-button topics in American politics, its creators are scrambling to decipher the mysterious nuances of the technology.

Reed’s view

Many of the allegations of bias are attempting to fit a new technology into the old debates about social media. ChatGPT itself cannot discriminate in any conventional sense. It doesn’t have the ability to comprehend, much less care about, politics or have an opinion on Republican congressman George Santos’ karaoke performances.

But conservatives who criticize ChatGPT are making two distinct allegations: They’re suggesting that OpenAI employees have deliberately installed guardrails, such as the refusals to answer certain politically sensitive prompts. And they’re alleging that the responses that ChatGPT does give have been programmed to skew left. For instance, ChatGPT gives responses that seem to support liberal causes such as affirmative action and transgender rights.

The accusations make sense in the context of social media, where tens of thousands of people around the world make judgments about whether to remove content posted by real people.

But those accusations reflect a misunderstanding of how ChatGPT’s technology works at a fundamental level. All the evidence points to unintentional bias, stemming from sources that include its underlying dataset: the internet.

ChatGPT is possible because computer scientists figured out how to teach a software program to turn an incomprehensibly large amount of data into a knowledge base it can draw on to compose an answer to almost any question.

But a lot of the text out there on the internet was created by people who are bad at writing, grammar, and spelling. So OpenAI hired people to have conversations with the AI and grade its answers on how good they sounded. They weren’t judging it only on whether it was accurate, or whether it said the right thing. They wanted it to sound like a real person who can string together coherent sentences.

The downside of teaching AI this way is that the computer is free to make inferences on its own. And sometimes, computers learn the wrong thing.

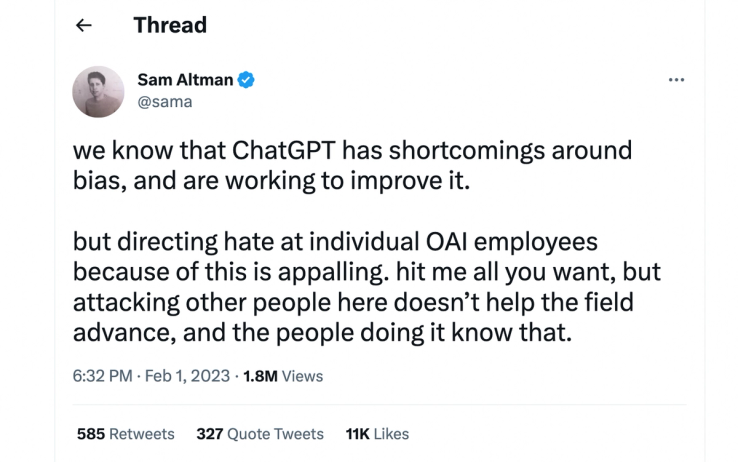

“We are working to improve the default settings to be more neutral, and also to empower users to get our systems to behave in accordance with their individual preferences within broad bounds,” OpenAI CEO Sam Altman tweeted on Wednesday. “This is harder than it sounds and will take us some time to get right.”

One possible explanation for why the Trump question went unanswered: Humans training the model would have downgraded incendiary responses, political and otherwise. The internet is filled with vitriol and offensive language about Trump, which may have triggered something the AI learned from other training that had nothing to do with the former president. The model may not yet have learned enough to understand the distinction.

I’m told there was never any training or rule created by OpenAI designed to specifically avoid discussions about Trump.

Even before this political flare-up, OpenAI was contemplating a personalized version of the service that would conform to the political beliefs, tastes, and personalities of users.

But even that poses real technological challenges, according to people who work at OpenAI, and it risks creating something akin to the “filter bubbles” we’ve seen on social media.

For now, ChatGPT isn’t presenting itself as a way to find answers to serious questions. Its answers to factual inquiries, biased or otherwise, can’t be taken seriously. The AI is very good at sounding human, but it has trouble with math, gets basic facts wrong, and often just makes stuff up — a tendency people inside OpenAI refer to as “hallucinating.”

ChatGPT has said in different responses that the world record holder for racing across the English Channel on foot is George Reiff, Yannick Bourseaux, Chris Bonnington or Piotr Kurylo. None of those people are real, and, as you might already know, nobody has ever walked across the English Channel.

Unlike on social media, where the most divisive and sensational content is programmed to spread faster and reach the widest audience, ChatGPT’s answers are sent only to one individual at a time.

From a political bias standpoint, ChatGPT’s answers are about as consequential as a Magic 8 Ball.

The worry, and it’s an understandable one, is that one day ChatGPT will become extremely accurate, stop hallucinating, and become the most trusted place to look up basic information, replacing Google and Wikipedia as most people’s go-to research tools.

That’s not a foregone conclusion. The development of AI does not follow a steady, predictable trajectory like Moore’s Law, the observation that the number of transistors that fit on a computer chip doubles roughly every two years.

There are AI experts who believe the technology that underlies ChatGPT will never be able to reliably spit out accurate results. And if that never happens, it won’t be a very effective political pundit, biased or not.

Room for Disagreement

Conservative commentator Alexander Zubatov laid out a half dozen examples of ChatGPT responses that exhibit a left-wing bias.

Zubatov said he’s been researching ChatGPT since it launched and has noticed disclaimers that seem like they’re “hard coded” by engineers at OpenAI.

“It does seem to me that someone has their thumb on the scale and is doing something in a misguided way to do these kinds of things,” he said.

The View From Ourselves

Vilas Dhar, president of the Patrick J. McGovern Foundation and a prominent commentator on AI, said that if ChatGPT is giving us results we don’t like, we have only ourselves to blame.

“What it learned on is the whole of human discourse,” he said. And that discourse is often a mess.

Dhar said one of the best uses for AI is probably not to produce unbiased results, but to highlight the biases that already exist.